VMware today announced vCloud Express. This new type of service lets customers get on-demand, pay-as-you-go Infrastructure as a Service. Several leading Virtual Service Providers (VSPs) like Terremark, BlueLock, Hosting.com and Melbourne IT have adopted this technology and currently have several products in beta. Look here for more information.

Introducing VMware vCloud™ Express

VMware is today introducing VMware vCloud™ Express, a new class of service that will deliver reliable, on-demand, pay-as-you-go compute as a service. Built on the industry’s leading and most complete virtualization platform, VMware vSphere™, VMware vCloud™ Express will enable customers to start with VMware vCloud™ Express and grow into full enterprise-class cloud environments with high availability and service level guarantees when they are ready to move into production. Unlike other on-demand cloud solutions, VMware vCloud™ Express will give developers quick access to a pay-as-you-go infrastructure that is compatible with in-house VMware virtualized IT environments, making the interoperability and portability of applications from external development to internal deployment easy. With VMware vCloud™ Express, customers will have the flexibility to leverage IT resources when they need them and pay for only what they use.

VMware vCloud™ Express will be available through many leading service providers including Terremark, BlueLock, Hosting.com, Logica, and Melbourne IT. These service providers are launching beta releases of their services today. To try VMware vCloud™ Express, find your preferred provider by going to: www.vmware.com/vcloudexpress.

“By leveraging VMware’s best-in-breed virtualization technologies and our vast experience providing solutions to enterprise and Federal government customers, our vCloud™ Express offering will provide customers a uniquely differentiated, cost-effective platform that meets their IT infrastructure needs,” said Manuel D. Medina, chairman and chief executive officer, Terremark. “Terremark’s vCloud™ Express services will provide our customers pay-as-you-go, on-demand access to enterprise-class infrastructure that is flexible enough to offer unmatched compatibility with their own internal IT platforms.”

What this really means is that you can now connect your virtual infrastructure, running vSphere, with the VSP(s) of your choice. Expanding your infrastructure is now a matter of going to a website, registering with your credit card and selecting your type of service. No more, no less. You connect your vSphere setup with the service and you have expanded your virtual capacity. Easy does it!

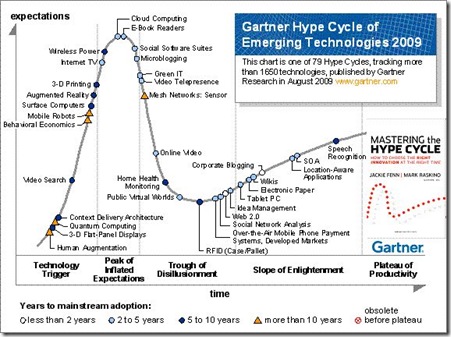

Well, not entirely true. It’s still in beta and it won’t be perfect at first. But in general I like the product. It makes Cloud Computing, especially IaaS, more understandable and will help get Cloud Computing out of the “hype cycle” and into the realistic world of IT we live in. It’s not just a product supported by one vendor; it also has a broad ecosystem of service providers attached to it, and it promotes interoperability which, in my opinion, is one of the key factors in making Cloud Computing a success!